New Delhi, India | February 1, 2026

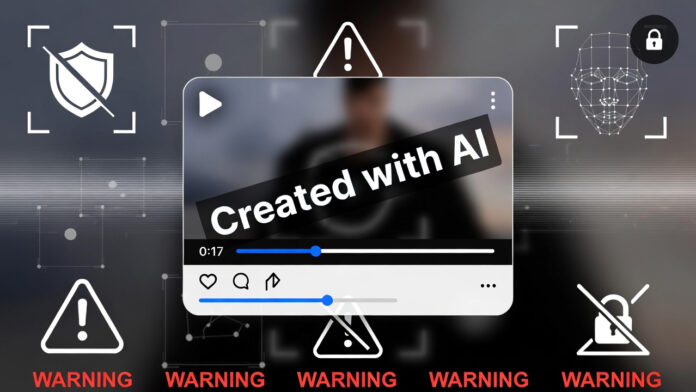

In a decisive move to curb the growing menace of deepfakes and AI-driven misinformation, the Ministry of Home Affairs (MHA) has issued new mandatory guidelines for social media influencers, making watermarking of AI-generated content a legal requirement.

The rules, which came into effect on February 1, 2026, are being described by officials as a strong preventive step to protect digital trust, public order, and individuals from reputational and financial harm.

🧠 Mandatory Watermark and Disclosure Norms

Under the new MHA guidelines, influencers and content creators must clearly disclose the use of artificial intelligence in any image, video, or audio content.

Key requirements include:

- A visible “Created with AI” watermark on AI-generated photos or videos
- Mandatory disclosure at the beginning and end of videos
- No hidden or ambiguous labeling; disclosure must be clear to viewers

The directive applies across all social media formats, including reels, shorts, stories, and long-form videos.

⚖️ Legal Liability for Violations

The MHA has made it clear that failure to comply will attract strict legal consequences.

If an influencer shares AI-generated or deepfake content without disclosure and it leads to any of the following, they may face action under the Information Technology Act and the Bharatiya Nyaya Sanhita (BNS):

- Defamation
- Public misinformation
- Social unrest
- Financial or reputational damage

Officials said intent will be assessed, but negligence will not be treated lightly.

📱 Platforms Given 24-Hour Takedown Deadline

Social media platforms have also been brought under tighter compliance obligations.

Under an arrangement coordinated between the MHA and the Ministry of Electronics and Information Technology (MeitY), platforms such as X, Instagram, and YouTube must remove reported deepfake or manipulated AI content within 24 hours. Failure to act may lead to regulatory penalties.

These measures align with updated intermediary guidelines issued by MeitY.

💳 Focus on Stopping AI-Driven Cyber Fraud

Officials said the move was driven largely by a spike in AI voice cloning and fake video calls, which have been used in recent months to carry out banking and identity fraud.

The Indian Cyber Crime Coordination Centre (I4C) has flagged deepfake-enabled scams as one of the fastest-growing cyber threats in India.

Victims are advised to report such cases through the Cyber Crime Portal.

🔍 Why This Matters

Experts say these rules could significantly:

- Reduce the spread of misleading AI-generated content
- Protect public figures and private individuals from deepfake abuse
- Restore trust in digital media and influencer-driven communication

The government has indicated that enforcement will be strict but progressive, with awareness drives planned for creators and platforms.