San Francisco, January 25, 2026

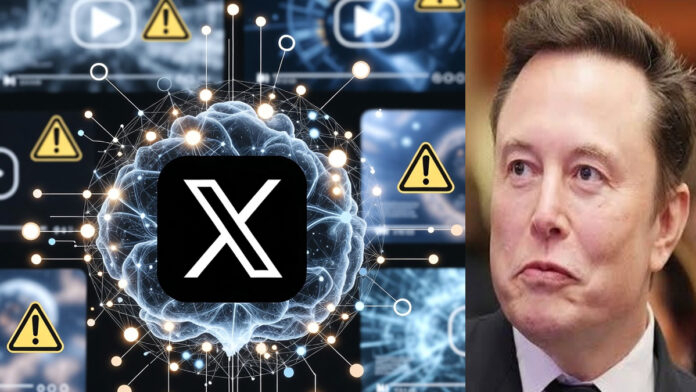

X, the social media platform owned by Elon Musk, has announced that it is activating a new artificial intelligence tool designed to detect and flag deepfake content within 30 seconds, a major step in the platform’s efforts to combat misinformation.

According to the company, the AI system will go live tonight and will focus on identifying manipulated audio and video, along with other synthetic media, that could mislead users during periods of international tension and ahead of upcoming elections.

How the New AI Tool Works

X said the system uses advanced machine-learning models trained on deepfake patterns, including altered facial movements, synthetic voice signatures, and inconsistencies in visual and audio data.

Once a likely deepfake is detected:

Suspicious content will be flagged within 30 seconds

Posts may carry context labels or warning notices

In high-risk cases, distribution may be limited pending review

The platform emphasized that the tool is designed for rapid response, addressing one of the biggest challenges in moderating viral misinformation.
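
The outcomes listed above can be pictured as a simple triage rule. The following is only an illustrative sketch, not X's implementation: the company has not published its scoring scheme, so the `triage` function, the `Action` names, and both threshold values are hypothetical. The 30-second deadline is the only figure taken from the announcement.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NONE = "none"
    LABEL = "label"                                # context label or warning notice
    LIMIT_PENDING_REVIEW = "limit_pending_review"  # high-risk case


# Hypothetical thresholds: the announcement gives no numbers.
LABEL_THRESHOLD = 0.6
LIMIT_THRESHOLD = 0.9

# The only figure taken from the announcement.
FLAG_DEADLINE_SECONDS = 30.0


@dataclass
class Decision:
    action: Action
    within_deadline: bool


def triage(score: float, seconds_since_post: float) -> Decision:
    """Map a detector score in [0, 1] to one of the outcomes described above."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= LIMIT_THRESHOLD:
        action = Action.LIMIT_PENDING_REVIEW
    elif score >= LABEL_THRESHOLD:
        action = Action.LABEL
    else:
        action = Action.NONE
    # Record whether the decision was reached inside the 30-second window.
    return Decision(action, seconds_since_post <= FLAG_DEADLINE_SECONDS)
```

Under these assumed thresholds, a post scored 0.95 would have its distribution limited pending review, while one scored 0.7 would only receive a label.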

Focus on Elections and Global Stability

The announcement comes amid heightened geopolitical tensions and a packed global election calendar, with deepfake videos and AI-generated audio clips increasingly being used to spread false narratives.

X said the move is part of a broader initiative to:

Protect democratic processes

Reduce viral misinformation

Increase transparency around AI-generated content

The company has faced growing pressure from governments and regulators worldwide to strengthen safeguards against manipulated media.

Balancing Safety and Free Expression

X stated that flagged content will not always be removed automatically. Instead, the platform aims to balance content safety with free expression, allowing human review where necessary.

The company also indicated that creators will be notified if their content is flagged and may be given options to appeal or provide context, depending on the case.

Industry-Wide Implications

Experts say X’s move could set a new industry standard for real-time deepfake detection on social media platforms, especially as generative AI tools become more accessible.

If successful, similar systems could be adopted across major platforms to curb the rapid spread of deceptive AI-generated content.